The latent space is eating the world

So what am I talking about? Let the latent space tell you:

Latent space is the space within machine learning models where information is represented in a way that is not directly interpretable by humans. It can be thought of as a high-dimensional map, where features are mapped to points in the space, and similar features are close together. By feeding the model with specific prompts or inputs, we can navigate the latent space to find the information we are looking for.
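To make "similar features are close together" concrete, here is a minimal sketch. The three-dimensional vectors are hand-picked toy values, not output from any real model, and `nearest` is a hypothetical helper for illustration only. Real embeddings have hundreds or thousands of dimensions, but the idea of measuring closeness is the same.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy "embeddings": assumed values chosen by hand for illustration.
EMBEDDINGS = {
    "knight": [0.9, 0.8, 0.1],
    "paladin": [0.85, 0.75, 0.2],
    "teapot": [0.1, 0.2, 0.9],
}


def nearest(word, embeddings=EMBEDDINGS):
    """Return the other entry whose vector points most nearly the same way."""
    query = embeddings[word]
    others = [(name, vec) for name, vec in embeddings.items() if name != word]
    return max(others, key=lambda item: cosine_similarity(query, item[1]))[0]


print(nearest("knight"))  # paladin
```

Navigating the latent space with a prompt is, loosely, the same operation at scale: the prompt becomes a point, and the model works with whatever sits near it.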

Vector space is a general term in mathematics for a set of elements that can be added together and scaled. Vector spaces are used extensively within STARKs, which work with polynomials over finite fields.

These topics are inherently complex, but they are deeply intertwined with my daily work. In this two-part series, I will demonstrate how we are leveraging these emerging technologies to create exciting new possibilities in various fields, and how you can apply them in your own work.

Specialized machine learning models, such as text2img (Midjourney, Stable Diffusion, DALL-E) and LLMs (ChatGPT), and advances in cryptography will be key drivers of our species' growth and development in the coming decade. In a few years, you will use them thousands of times a day in the background of your work. Though there may be pushback or resistance, the march of technological progress is inevitable. Don't just blindly embrace it, however: understand what the limitations are and how to leverage it. Don't take my word or anyone's word for it; figure it out yourself.

So where are we now? The emerging application layer.

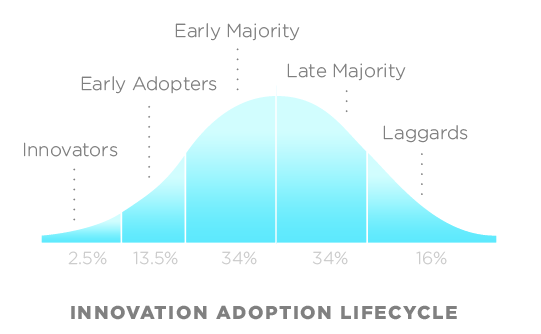

In software development, the application layer is a crucial stage in the adoption of a technology. It's the point at which the technology moves beyond early adopters and gains broader acceptance among the general population. At this stage, the technology is often more user-friendly and accessible, making it easier for a wider audience to use and benefit from it.

don't be a laggard...

You can think of it this way:

The internet -> web browser -> application

Ethereum -> rollups -> applications

Machine learning models -> APIs -> applications

It took 11 years to get from the birth of Netscape to Facebook.

It took almost as long to get from Bitcoin to usable dapps (2009 - 2018, DeFi). Yes, yes, BTC is usable, but it's definitely not ready for worldwide usage. With rollups, I believe we will get there. (We will dive into ZK and that emerging space in other posts.)

While LLMs have been in existence for some time, it wasn't until the release of the GPT API that individuals outside the machine learning field began developing applications using this technology. Interestingly, GPT-3, the underlying technology that powers ChatGPT, had been available for around six months before the creation of ChatGPT. OpenAI had noticed that no one was utilizing this technology, and as a result, they decided to create ChatGPT themselves. This move ignited an AI arms race.

At Bibliotheca DAO we are now using GPT along with open-source tools like LangChain and GPT Index to create experiences:

- A never-ending storytelling bot within Discord that players can interact with

- Generative battle reports built from on-chain (Realms: Eternum) events and streamed to the client

- An in-game advisor (ChatGPT clone) that gives you contextual game updates by reading the Postgres DB - Twitter
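As a rough sketch of the advisor idea, the pattern is: read recent game events from the database, put them into the prompt, and let the model answer with that context. This is not our production code. The `events` table, its columns, and every function name below are hypothetical, SQLite stands in for Postgres so the example runs anywhere, and `complete` is a placeholder for whatever LLM call you use.

```python
import sqlite3


def recent_events(conn, limit=3):
    """Fetch the newest game events, newest first."""
    return conn.execute(
        "SELECT realm, description FROM events ORDER BY id DESC LIMIT ?",
        (limit,),
    ).fetchall()


def build_advisor_prompt(events, question):
    """Place game state ahead of the player's question so the model can use it."""
    context = "\n".join(f"- {realm}: {description}" for realm, description in events)
    return (
        "You are an in-game advisor. Use only the events below.\n"
        f"Recent events:\n{context}\n"
        f"Player question: {question}\n"
    )


def ask_advisor(conn, question, complete):
    """`complete` is any callable that maps a prompt string to a reply string."""
    return complete(build_advisor_prompt(recent_events(conn), question))


def demo_connection():
    """In-memory database with a made-up `events` table and made-up rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE events (id INTEGER PRIMARY KEY, realm TEXT, description TEXT)"
    )
    conn.executemany(
        "INSERT INTO events (realm, description) VALUES (?, ?)",
        [("Realm A", "was raided"), ("Realm B", "harvested wheat")],
    )
    return conn


if __name__ == "__main__":
    # A fake model that just echoes the prompt, so the sketch runs offline.
    print(ask_advisor(demo_connection(), "What happened overnight?", lambda p: p))
```

Keeping the model call behind a plain callable makes it easy to swap providers or test the prompt without spending tokens.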

It took text2img even less time to reach escape velocity. We have been at the forefront, constantly snapping at the heels of the latest technology. In one year we went from:

to

Full credit to Dham, who created both of these images and introduced us to diffusion models before they were cool.

So we are here now. Where do we go next?

Where will we be in 12 months? The creators of Midjourney believe that there will be at least six more boosts in fidelity before we start to plateau. This is not to mention the progress made by Hugging Face in advancing the open-source space. If I had to bet, I would say that Hugging Face will be one of the most influential sites of the next decade: it is to open-source ML what Linux was to code. Eventually, as commoditization spreads, these models will cost only the electricity needed to run them.

How we are leveraging text2img

Midjourney still has not exposed its API publicly, so it's not really usable in production for generation within games, only for static images. Luckily there are some great new applications, and a notable one is Scenario. It allows anyone to use Stable Diffusion to create custom images, and they just made their API available!

We are currently running a DAO drive to train a custom Stable Diffusion model on Midjourney images, which we will use in the DAO's upcoming game, Realms: Adventurers. This model will enable players to create unique avatars for their characters. Using open-source tools, we have designed an end-to-end pipeline for this project:

1. Create MJ image in discord ->

2. Crawl channel with bot ->

3. Train model ->

4. Call model via discord and show generated image

Once our custom Stable Diffusion model is fully operational and the pipeline is running, it will be capable of generating unique Realms characters indefinitely. We plan to keep improving the model by feeding the generated images back into the training set, further increasing its accuracy and quality. This iterative process will let us refine the model over time, opening up exciting new possibilities for our game and other creative projects.
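The four steps and the feedback loop can be sketched roughly like this. Treat it as a shape, not an implementation: `Message` is a simplified stand-in for a Discord message, the bot name is an assumption, and `train_model` and `generate` are placeholders where real fine-tuning and inference would go. Every name here is hypothetical.

```python
from dataclasses import dataclass, field

IMAGE_SUFFIXES = (".png", ".jpg", ".jpeg", ".webp")


@dataclass
class Message:
    """Simplified stand-in for a Discord message (step 1 output)."""
    author: str
    attachments: list = field(default_factory=list)


def crawl_channel(messages, bot_name="Midjourney Bot"):
    """Step 2: collect image URLs posted by the image bot (name is assumed)."""
    return [
        url
        for message in messages
        if message.author == bot_name
        for url in message.attachments
        if url.lower().endswith(IMAGE_SUFFIXES)
    ]


def train_model(image_urls):
    """Step 3: placeholder for fine-tuning; just records a deduplicated set."""
    return {"training_set": sorted(set(image_urls))}


def generate(model, prompt):
    """Step 4: placeholder for inference; returns a made-up file name."""
    index = len(model["training_set"])
    return f"generated/{index}-{prompt.replace(' ', '-')}.png"


def retrain_with_feedback(model, generated, approve):
    """Feedback loop: add only approved outputs to the set, then retrain."""
    kept = [url for url in generated if approve(url)]
    return train_model(model["training_set"] + kept)


if __name__ == "__main__":
    channel = [
        Message("Midjourney Bot", ["a.png", "notes.txt"]),
        Message("player", ["b.png"]),
    ]
    model = train_model(crawl_channel(channel))
    image = generate(model, "elf mage")
    model = retrain_with_feedback(model, [image], approve=lambda url: True)
    print(model["training_set"])
```

The `approve` gate is a deliberate design choice in this sketch: feeding every generated image back in without curation risks amplifying the model's own flaws, so some human or automated filter sits between generation and retraining.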

This is really just scratching the surface of what is possible now...

If you want to get into the weeds and start developing with us - reach out to me on Twitter.

In the next article, I will explain how we are leveraging the vector space for eternal gaming experiences.